Artificial Intelligence Turns Simple Text into Realistic Building Designs

Researchers develop a smarter image-generation system that produces realistic building designs with correct floor and facade details

Following the development of diffusion models that generate art and video from simple prompts, researchers at the Japan Advanced Institute of Science and Technology have created an artificial intelligence (AI) system that turns text descriptions into accurate architectural images. The model addresses a major limitation of existing AI design tools, which produce visually convincing images without structural accuracy. The system generates building images that follow structural rules, making AI tools more useful and reliable for architectural design.

When working on projects, architects must quickly turn rough concepts into visual representations. Text-to-image models offer an opportunity here, since high-quality designs can be generated simply by typing a description. Some of these systems can also incorporate rough sketches or depth information, offering additional control over the results. However, these models often fail to follow the prompt accurately. For example, even a direct prompt such as "generate a 5-story building" might produce an image of a building with the wrong number of floors. The reason lies in the training datasets, which lack detailed annotations about building structure, making it difficult for AI models to learn precise spatial requirements, such as floor counts or the exact placement of windows and facade elements.

Researchers at the Japan Advanced Institute of Science and Technology (JAIST) have now addressed these problems with a retrieval-augmented generation system. It combines text prompts with information retrieved from external architectural datasets, enabling the model to reference real architectural examples during generation. Such a tool could lay the groundwork for AI-based architectural design tools that make the process easier and faster.

The work, published in the journal Frontiers of Architectural Research, was carried out by a collaborative team led by Associate Professor Haoran Xie from JAIST, together with Associate Professor Ye Zhang from Tianjin University, China.

"Today, high-quality architectural visualization requires significant expertise and expensive software. With the help of this work, individual designers and smaller teams will be able to participate meaningfully in the design of their own built environments, expressing preferences and seeing realistic results without needing a large professional team," said Dr. Xie.

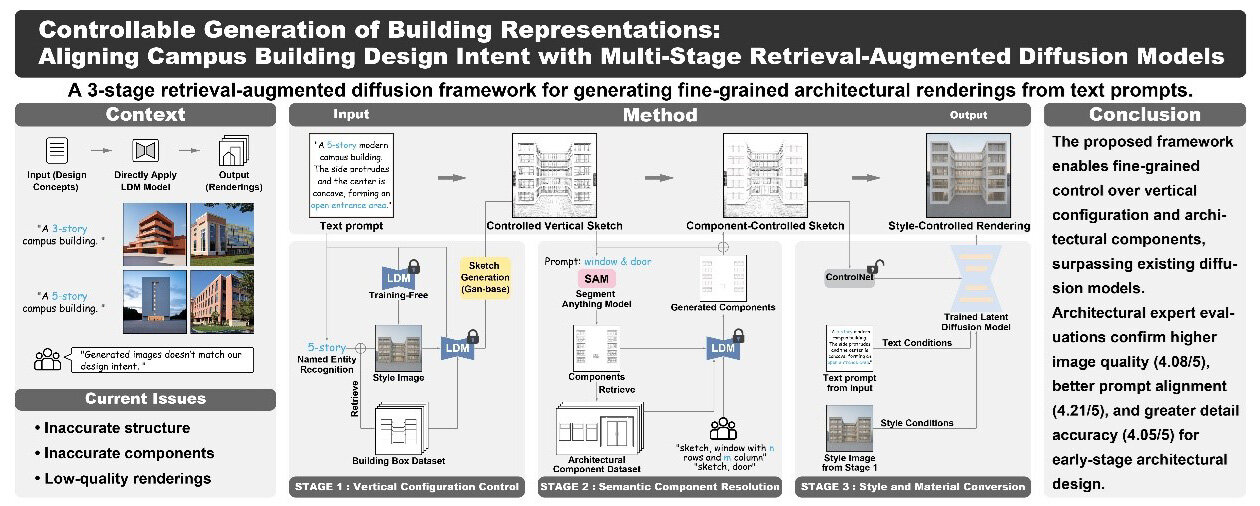

The team designed the framework to mirror real architectural practice. Architects typically begin with simple sketches that show the overall shape and layout of a building. Over time, these sketches are gradually refined with more detailed elements, such as windows, doors, and facade components. The new system follows this step-by-step process.

First, the system converts the text prompt into a simple structural sketch that captures the overall building form and ensures the correct number of floors. Next, it refines this sketch by adding detailed architectural elements using a database of real building components. Finally, the refined sketch is combined with the original text description to produce a realistic, high-quality building rendering that accurately reflects the designer's intent.
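The three stages described above can be sketched as a simple data flow. This is only a toy illustration, not the researchers' implementation: the `Sketch` class, the `COMPONENT_DB` dictionary, and every function name below are hypothetical, the retrieval step is a placeholder keyword lookup, and the final stage returns a plain dictionary where a real system would call a diffusion model.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Sketch:
    """Toy stand-in for a structural sketch (all fields are hypothetical)."""
    floors: int
    components: list[str] = field(default_factory=list)


# Hypothetical component database. The article describes a dataset of real
# window and entrance arrangements; here it is just a few labelled strings.
COMPONENT_DB = {
    "window": ["grid windows", "ribbon windows"],
    "entrance": ["central entrance", "corner entrance"],
}


def parse_floor_count(prompt: str, default: int = 1) -> int:
    """Pull an explicit floor count such as '5-story' out of the prompt."""
    match = re.search(r"(\d+)[-\s]?(?:story|storey|floor)", prompt.lower())
    return int(match.group(1)) if match else default


def generate_structural_sketch(prompt: str) -> Sketch:
    """Stage 1: coarse building form with the requested number of floors."""
    return Sketch(floors=parse_floor_count(prompt))


def refine_with_components(sketch: Sketch, prompt: str) -> Sketch:
    """Stage 2: add components whose category is mentioned in the prompt."""
    text = prompt.lower()
    retrieved = [
        options[0]  # placeholder retrieval: take the first stored example
        for category, options in COMPONENT_DB.items()
        if category in text
    ]
    return Sketch(floors=sketch.floors, components=retrieved)


def render(sketch: Sketch, prompt: str) -> dict:
    """Stage 3: combine the refined sketch with the original prompt."""
    return {
        "prompt": prompt,
        "floors": sketch.floors,
        "components": sketch.components,
    }


def text_to_building(prompt: str) -> dict:
    """Run the three stages in order."""
    coarse = generate_structural_sketch(prompt)
    detailed = refine_with_components(coarse, prompt)
    return render(detailed, prompt)


result = text_to_building(
    "A 5-story campus building with large windows and a main entrance"
)
print(result["floors"], result["components"])
# prints: 5 ['grid windows', 'central entrance']
```

The point of the staged design is that the floor count is fixed in the first stage and carried through unchanged, so later stages can add detail without altering the overall structure.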

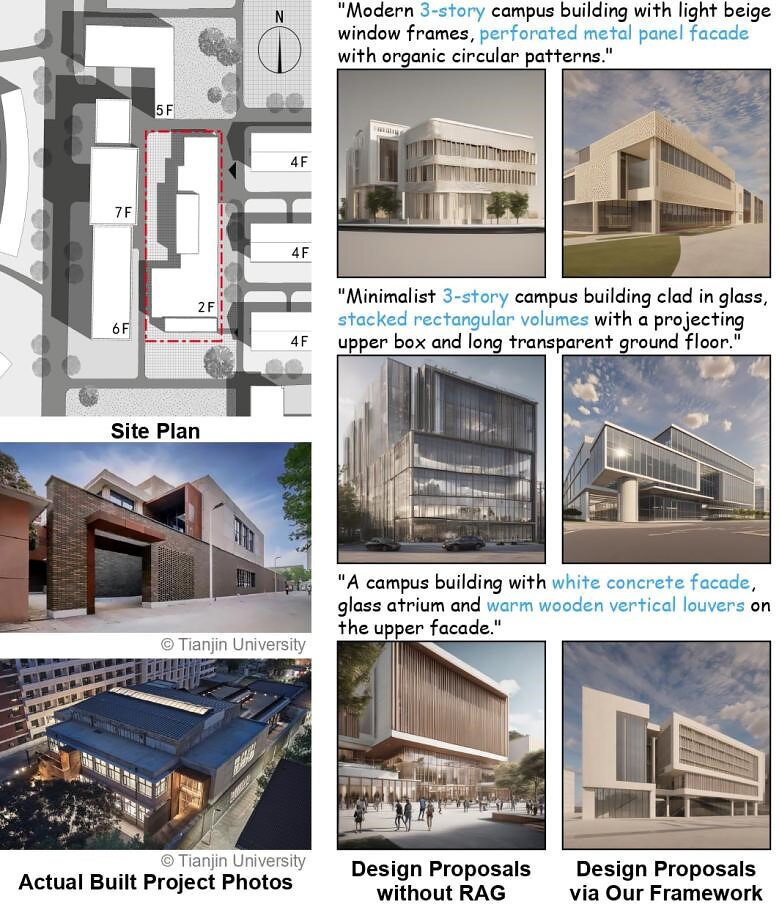

To evaluate the framework, the researchers tested it on campus building designs, where controlling the number of floors and the placement of windows and entrances is especially important.

They constructed three specialized datasets: a building box dataset containing 2,200 images, a component dataset with 4,000 images showing different window and entrance arrangements, and a sketch-rendering pair dataset with 1,600 examples linking detailed sketches, text prompts, and final campus building renderings.

In objective evaluations, the framework achieved 70.5% accuracy in vertical configuration and outperformed baseline diffusion models on several quality metrics measuring structural accuracy, visual realism, and alignment between generated images and text prompts.
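The release does not spell out how accuracy in vertical configuration was computed. One plausible reading, assumed here purely for illustration, is the share of generated images whose floor count exactly matches the count requested in the prompt. The function name and the sample values below are made up and are not results from the study.

```python
def floor_count_accuracy(requested: list[int], generated: list[int]) -> float:
    """Fraction of samples whose generated floor count equals the requested one.

    Assumption: exact-match scoring. The study's actual metric may differ.
    """
    if len(requested) != len(generated):
        raise ValueError("requested and generated must have the same length")
    if not requested:
        raise ValueError("need at least one sample")
    matches = sum(r == g for r, g in zip(requested, generated))
    return matches / len(requested)


# Made-up example values, not data from the paper.
requested_floors = [5, 3, 4, 6]
generated_floors = [5, 3, 5, 6]

accuracy = floor_count_accuracy(requested_floors, generated_floors)
print(f"{accuracy:.1%}")  # prints: 75.0%
```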

The results were further supported by a subjective study involving 56 graduate students in architecture and design. Using a five-point Likert scale, where 1 indicated "very dissatisfied" and 5 indicated "very satisfied," participants gave the system average scores above 4 for image quality, alignment with prompts, and architectural detail accuracy.
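As a rough illustration of how such survey scores are summarized, the sketch below averages five-point Likert ratings per criterion. The ratings are invented placeholders, not the participants' responses, and `summarize_likert` is a hypothetical helper name.

```python
from statistics import mean

# Hypothetical ratings on a 1-5 Likert scale (1 = very dissatisfied,
# 5 = very satisfied). These are NOT data from the study.
ratings = {
    "image quality": [5, 4, 4, 5],
    "alignment with prompts": [4, 4, 5, 4],
    "architectural detail accuracy": [4, 5, 4, 4],
}


def summarize_likert(ratings_by_criterion: dict[str, list[int]]) -> dict[str, float]:
    """Return the mean score per criterion after checking the 1-5 range."""
    summary = {}
    for criterion, scores in ratings_by_criterion.items():
        if any(score < 1 or score > 5 for score in scores):
            raise ValueError(f"out-of-range Likert score for {criterion!r}")
        summary[criterion] = mean(scores)
    return summary


summary = summarize_likert(ratings)
for criterion, average in summary.items():
    print(f"{criterion}: {average:.2f}")
```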

Such a system could significantly improve early-stage architectural design workflows. "Designers can use it to quickly revise schemes in response to client feedback during meetings, dramatically shortening the design iteration cycle. Planners and developers can use the tool to visualize and compare dozens of design alternatives under shared constraints before any detailed modeling begins," explained Dr. Xie.

As AI continues to evolve, tools like this could make architectural visualization quicker, more accessible, and more reliable.

Image 1:

Image title: Retrieval-augmented generation (RAG) architectural design system

Image caption: The figure shows how the proposed framework turns a text description of a building into a realistic architectural image, step by step. First, the system uses the text prompt to generate a simple structural sketch that captures the overall shape of the building, including the correct number of floors. Next, the sketch is refined by adding detailed architectural elements, such as windows and doors, using a database of real building components as reference. Finally, the refined sketch is combined with the original text description to produce a high-quality, realistic building image that matches the designer's intent.

Image credit: Associate Professor Haoran Xie from the Japan Advanced Institute of Science and Technology

Image source link: N/A

License type: Original content

Usage restrictions: Credit must be given to the creator.

Image 2:

Image title: Retrieval-augmented AI generates more realistic campus building façade designs

Image caption: This figure demonstrates how the proposed framework was applied to a real campus building design project. The left column shows the actual site plan and photographs of the completed building, provided by Tianjin University (reproduced with permission). The middle column shows building façade designs generated by a standard artificial intelligence (AI) model without our retrieval-augmented approach, while the right column shows designs produced by our framework. By comparing the two sets of results, it is clear that our framework can generate building designs that better match the specific style and constraints of a real campus environment, more closely reflecting what an architect would intend to build.

Image credit: Associate Professor Haoran Xie from the Japan Advanced Institute of Science and Technology

Image source link: N/A

License type: Original content

Usage restrictions: Credit must be given to the creator.

Reference

Title of original paper: Controllable Generation of Building Representations: Aligning Campus Building Design Intent with Multi-Stage Retrieval-Augmented Diffusion Models

Authors: Zhengyang Wang, Yuxiao Ren, Hao Jin, Jieli Feng, Xusheng Du, Ye Zhang*, and Haoran Xie*

Journal: Frontiers of Architectural Research

DOI: 10.1016/j.foar.2026.01.018

Additional information for EurekAlert

Latest Article Publication Date: March 26, 2026

Method of Research: Computational simulation/modeling

Subject of Research: Not applicable

Conflicts of Interest Statement: N/A

Funding information

This work was supported by the JST BOOST Program, Japan, Grant Number JPMJBY24D6, and the National Natural Science Foundation of China, Grant Number 52508023.

March 31, 2026