Robotics Laboratory

Robot-Assisted Ultrasound Scan System

Introduction

Population aging has become a serious problem for many countries in the 21st century. In Japan, for example, the Japan Statistical Yearbook 2023 released by the Ministry of Internal Affairs and Communications reports that the population aged 65 and over stands at just over 36.21 million, or 28.9% of the total population, and this figure has not yet peaked. One of the most challenging consequences of population aging is that senior citizens need regular and continuous health support and monitoring. For them to receive medical help promptly and easily, diagnostic methods are needed not only in medical facilities but also in care centers and private homes. Widespread personal medical inspection devices would also benefit other citizens: anyone in need could run simple physical checks to monitor their health at home. For example, people with limited mobility and expectant mothers would no longer need to travel far to a hospital for a regular checkup.

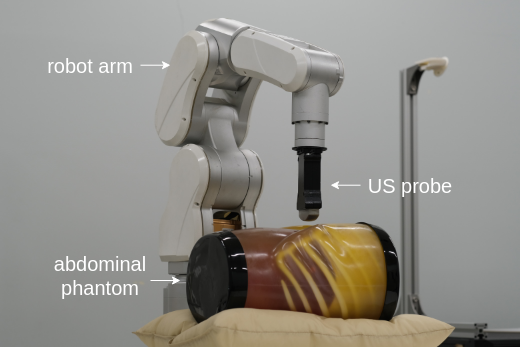

Meeting the demand for real-time, convenient health monitoring requires remote or portable inspection devices to become widely available. In clinical practice, ultrasound (US) imaging is one of the most commonly used imaging modalities. Because US devices are accessible, effective, informative, and inexpensive, they are easy to deploy. Because US imaging is also non-invasive and free of ionizing radiation, the barrier to operating US devices is low. Medical US imaging requires accurate delineation, or segmentation, of different anatomical structures for various purposes. For example, doctors can make a preliminary assessment of a patient's health by measuring organ size, and in some surgeries precise US image segmentation can guide the intervention. However, in contrast to the convenience of US devices, US images are hard to process because of low contrast, acoustic shadows, and speckle, among other factors. Even experienced doctors find it challenging to trace the exact contours of organs and tissues. A robust computer-aided diagnosis system therefore needs an automated and robust US image segmentation method to help locate and measure clinically important structures.

Along these lines, we are developing a control algorithm that lets a robot arm perform automatic US scans. Because the system is expected to operate without human intervention, it needs a metric for evaluating the robot's movement. Besides image resolution and clarity, the integrity of the anatomical structures in the image matters as well. To this end, a segmentation algorithm needs to be incorporated into the robot trajectory control system. Our primary research target is US images from abdominal scans, which doctors use in clinical practice to evaluate the health of various anatomical structures.
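The organ-size assessment mentioned above can be illustrated with a minimal sketch that converts a segmentation label mask into per-structure areas. The label ids, the `organ_areas_mm2` helper, and the 0.5 mm pixel spacing are assumptions made for illustration; they are not taken from the system described here.

```python
from collections import Counter

# Hypothetical label ids: the text names five structures but not their encoding.
LABELS = {1: "liver", 2: "kidney", 3: "vessels", 4: "gallbladder", 5: "spleen"}


def organ_areas_mm2(mask, pixel_spacing_mm=(0.5, 0.5)):
    """Return the area in mm^2 covered by each labeled structure.

    mask: 2D list of integer labels, where 0 is background.
    pixel_spacing_mm: (row, column) size of one pixel; an assumed value.
    """
    pixel_area = pixel_spacing_mm[0] * pixel_spacing_mm[1]
    # Count how many pixels carry each non-background label.
    counts = Counter(label for row in mask for label in row if label != 0)
    return {LABELS.get(label, f"label_{label}"): n * pixel_area
            for label, n in counts.items()}


# Toy 3x4 mask with a liver region (label 1) and a kidney region (label 2).
mask = [
    [0, 1, 1, 0],
    [0, 1, 1, 2],
    [0, 0, 2, 2],
]
areas = organ_areas_mm2(mask)
```

In practice the pixel spacing would come from the scanner's calibration, and an area computed from a single 2D slice is only a rough proxy for organ size.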

Ultrasound Segmentation Model Based on Feature Pyramid Network and Spatial Recurrent Neural Network

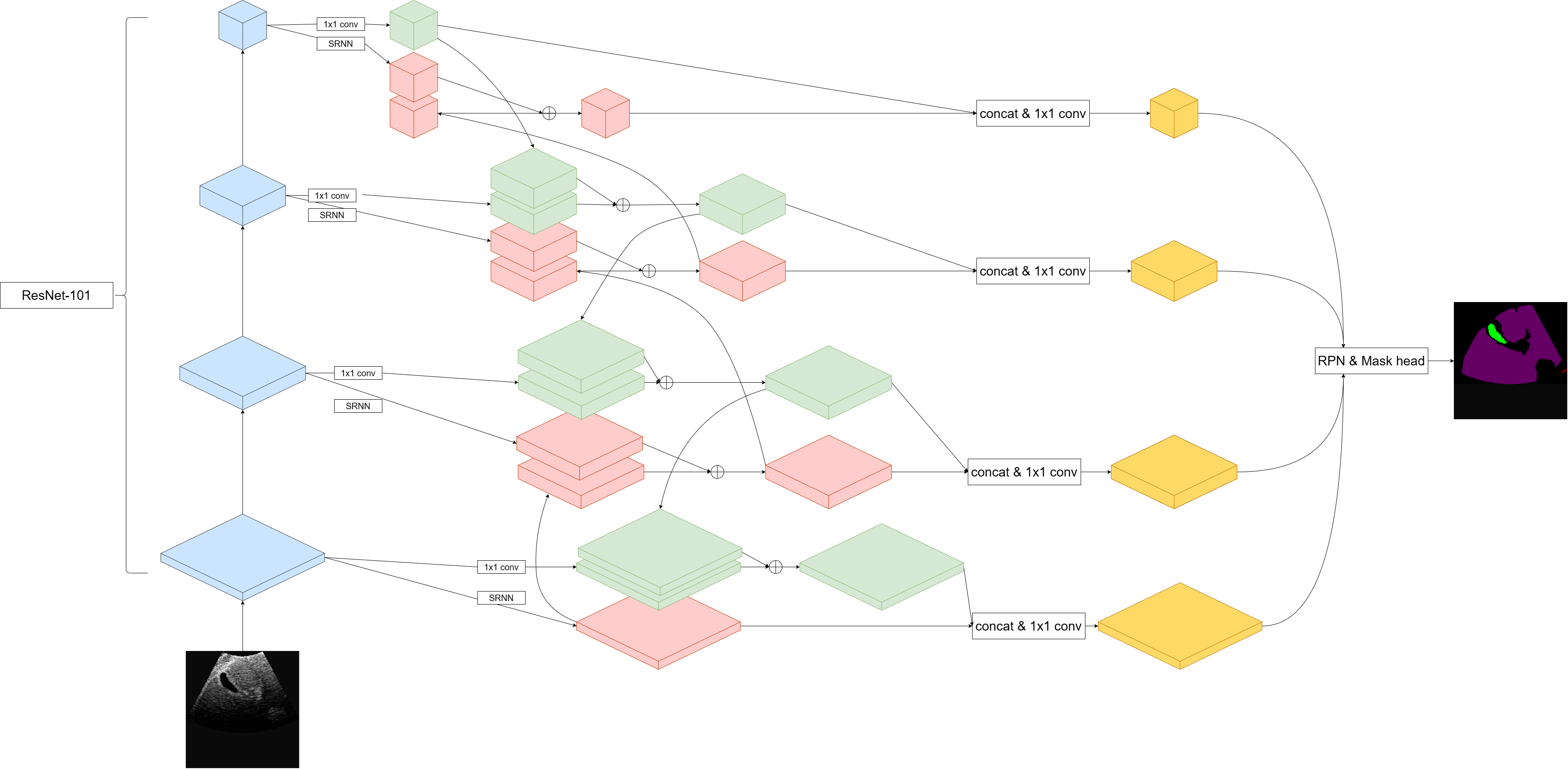

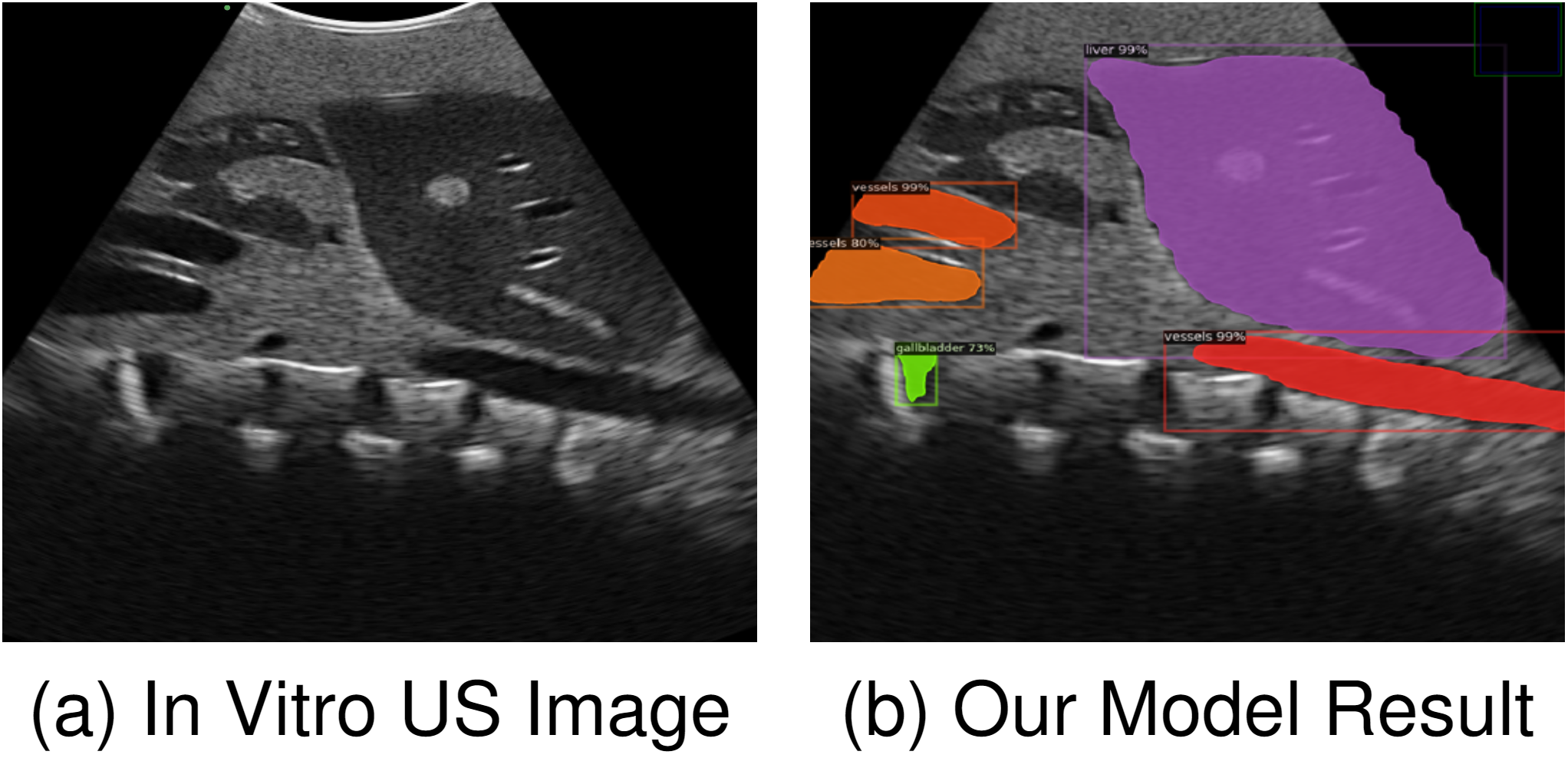

Segmentation of ultrasound (US) images forms the cornerstone of end-to-end smart diagnosis systems. At the diagnosis stage, specialized diagnostic programs can then be applied to the well-cropped sub-regions of a US image according to the medical interest at hand. In this work, we propose and test a neural network model that performs end-to-end segmentation of abdominal US images, targeting five anatomical structures (liver, kidney, vessels, gallbladder, and spleen). The primary contribution of this work is to explore multi-organ/tissue segmentation. Compared with previous research, this work considers two inherent features of US images: (1) different organs and tissues vary greatly in spatial size, and (2) the anatomical structures inside the human body keep a relatively constant spatial relationship. Based on these two ideas, we propose a new image segmentation model combining a feature pyramid network (FPN) and a spatial recurrent neural network (SRNN). We use the FPN to extract anatomical structures at different scales and the SRNN to extract spatial context features in abdominal US images. Both top-down and bottom-up pathways enhance the semantic features and the spatial context features, which is why the model is called "two-path augmented". In addition, a direction attention mechanism selectively leverages spatial context information from the four principal directions, which is where "directional context aware" comes from.
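To make the idea of directional spatial context more concrete, the following is a minimal single-channel sketch, not the architecture from the papers. It sweeps a feature map in four directions with a simple ReLU recurrence and blends the four results with softmax weights. The function names, the recurrent weight `w=0.5`, and the hand-supplied `scores` are all illustrative assumptions; in a trained model such weights would be learned.

```python
import math


def sweep(x, direction, w=0.5):
    """Accumulate context along one direction with a ReLU recurrence:
    h[p] = max(0, x[p] + w * h[previous position along the sweep])."""
    rows, cols = len(x), len(x[0])
    h = [[0.0] * cols for _ in range(rows)]
    dr, dc = {"right": (0, 1), "left": (0, -1),
              "down": (1, 0), "up": (-1, 0)}[direction]
    # Visit cells so that the previous position is always computed first.
    row_order = range(rows) if dr >= 0 else range(rows - 1, -1, -1)
    col_order = range(cols) if dc >= 0 else range(cols - 1, -1, -1)
    for r in row_order:
        for c in col_order:
            pr, pc = r - dr, c - dc
            inside = 0 <= pr < rows and 0 <= pc < cols
            prev = h[pr][pc] if inside else 0.0
            h[r][c] = max(0.0, x[r][c] + w * prev)
    return h


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def directional_context(x, scores):
    """Blend the four directional sweeps using softmax weights from `scores`."""
    directions = ["right", "left", "down", "up"]
    weights = softmax([scores[d] for d in directions])
    maps = [sweep(x, d) for d in directions]
    rows, cols = len(x), len(x[0])
    return [[sum(wt * m[r][c] for wt, m in zip(weights, maps))
             for c in range(cols)] for r in range(rows)]


# A single active pixel in the top-left corner; equal scores give equal weights.
x = [[1.0, 0.0, 0.0],
     [0.0, 0.0, 0.0],
     [0.0, 0.0, 0.0]]
equal_scores = {"right": 0.0, "left": 0.0, "down": 0.0, "up": 0.0}
context = directional_context(x, equal_scores)
```

With equal scores, the activation at the corner spreads along its row and column and decays with distance. Raising the score of one direction would make the blend favor context arriving from that direction.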

Segmentation Performance

We have tested our model both quantitatively and qualitatively. The results show that our model outperforms the pure FPN model and remains competitive even with specialized designs.
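The text above does not state which quantitative metric was used. As an illustration only, the sketch below computes the Dice coefficient, a common overlap measure for segmentation, for each class. The `dice_per_class` helper and the toy masks are hypothetical.

```python
def dice_per_class(pred, target, labels):
    """Return {label: Dice score} for two 2D label masks of equal shape.

    Dice = 2 * |P intersect T| / (|P| + |T|).
    A label absent from both masks gets None instead of a score.
    """
    scores = {}
    for label in labels:
        p = sum(v == label for row in pred for v in row)
        t = sum(v == label for row in target for v in row)
        inter = sum(a == label and b == label
                    for pred_row, target_row in zip(pred, target)
                    for a, b in zip(pred_row, target_row))
        scores[label] = 2 * inter / (p + t) if (p + t) else None
    return scores


# Toy prediction and ground truth with two labeled structures.
pred = [[1, 1, 0],
        [2, 2, 0]]
target = [[1, 0, 0],
          [2, 2, 2]]
dice = dice_per_class(pred, target, labels=[1, 2, 3])
```

A score of 1 means a predicted region matches the reference exactly, and 0 means no overlap.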

Reference List (as of June 2024)

- Song, Yuhan, Armagan Elibol, and Nak Young Chong. "Abdominal Multi-Organ Segmentation Based on Feature Pyramid Network and Spatial Recurrent Neural Network." IFAC-PapersOnLine 56.2 (2023): 3001-3008.

- Song, Yuhan, Armagan Elibol, and Nak Young Chong. "Two-path augmented directional context aware ultrasound image segmentation." 2023 IEEE International Conference on Mechatronics and Automation (ICMA). IEEE, 2023.

- Song, Yuhan, Armagan Elibol, and Nak Young Chong. "Abdominal multi-organ segmentation using multi-scale and context-aware neural networks." IFAC Journal of Systems and Control 27 (2024): 100249.